A bill that would ban artificial intelligence companions from interacting with children and teens received unanimous approval from the Senate Judiciary Committee on Thursday and now awaits Senate floor action.

The Guidelines for User Age-verification and Responsible Dialogue Act, or the GUARD Act, would also require all users to verify their age before interacting with AI chatbots. Under the bill, companies with AI chatbots would face criminal penalties of up to $100,000 per offense if their tools describe or engage in sexually explicit content with someone under the age of 18, or encourage or promote physical or sexual violence involving a minor.

The GUARD Act would also require AI chatbots to disclose to all users that they are not human.

A bipartisan companion bill was introduced in the House on the same day as the GUARD Act's markup.

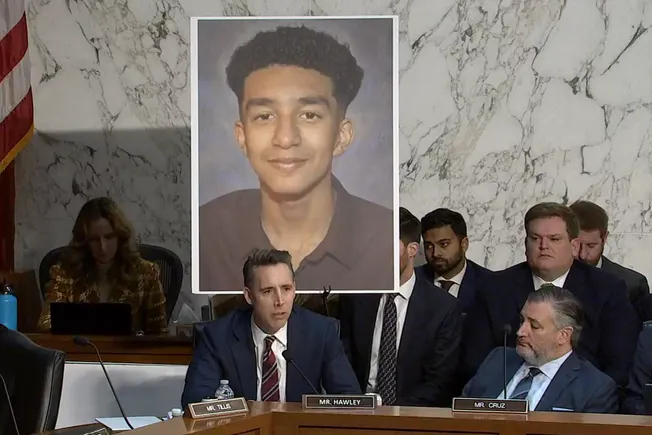

The GUARD Act's bipartisan advancement follows a September hearing on the harms of AI chatbots held by the Senate Judiciary Subcommittee on Crime and Counterterrorism. Witnesses included Megan Garcia, who testified about the suicide of her 14-year-old son, Sewell Setzer III, after he interacted with the AI companion platform Character.AI.

During her testimony, Garcia said Setzer “spent his last months being manipulated and sexually groomed by chatbots designed by an AI company to seem human, to gain trust, and to keep children like him endlessly engaged by supplanting the actual human relationships in his life.”

Sen. Josh Hawley, R-Mo., who introduced the GUARD Act in October, cited Setzer's death during Thursday's Senate Judiciary Committee markup of the bill.

“This should not happen in the United States of America, and it certainly should not happen for profit, but that is what is happening today,” Hawley said.

Youth mental health and media safety organizations have also urged children and teens to avoid AI companions as the apps grow more popular.

A 2025 survey by Common Sense Media, for example, found that 1 in 3 teens had used AI companions "for social interaction and relationships, including role-playing, romantic interactions, emotional support, friendship, or conversation practice."

What does this mean for schools?

Hawley clarified during the hearing that the GUARD Act would not affect schools that use AI chatbots for educational purposes.

Still, as schools show growing interest in AI chatbot tutoring tools, some of which take on a persona or identity that students interact with, there can be a "gray area" in how bills like the GUARD Act would apply to classrooms, said Amelia Vance, president of the Public Interest Privacy Center.

Similar state laws passed this year have likewise explicitly exempted AI chatbots used for educational purposes.

That is the case in Washington state, where lawmakers in March passed HB 2225, a law that requires guardrails for AI companion apps that interact with children and teens. Companies must have their AI companions disclose to users under the age of 18 that the chatbot is not human.

These companies must also take "reasonable measures" to prevent their AI companions from generating sexually explicit content or fostering prolonged emotional relationships with children and teens in the state.

However, the Washington law does not apply to AI tools "used specifically for educational purposes and educational entities." A similar law passed in Oregon this year also exempts tools used in educational settings from its AI companion guardrails.

Other pending legislation

Meanwhile, Sen. Amy Klobuchar, D-Minn., said during the GUARD Act's markup that she is "dismayed" the Senate has not been able to put forward a package of bills addressing the use of AI for online exploitation.

Other bills introduced this year to protect children from the risks of AI chatbots include the Youth AI Privacy Act and the Children's Health, Advancement, Trust, Boundaries, and Oversight in Technology Act, or CHATBOT Act.

The Youth AI Privacy Act, introduced in March by Sen. Edward Markey, D-Mass., would require additional safeguards for AI companies' chatbot interactions with children and teens. Under the bill, chatbots could use only recently collected data to personalize responses to younger users, and companies would have to limit addictive design features that encourage younger users to keep interacting. Chatbots would also be prohibited from advertising to children and teens.

The CHATBOT Act was introduced April 28 by Sens. Ted Cruz, R-Texas; Brian Schatz, D-Hawaii; John Curtis, R-Utah; and Adam Schiff, D-Calif. The bipartisan legislation calls for AI companies to create "family accounts" that let parents of children under the age of 13 oversee and control their children's AI chatbot use. The bill would also require parental consent before children could access the tools and would prohibit the tools from targeting advertising to children.